Brain Simulation Library on HPC Platforms and Brain Experimentation Support

Motivation for Brain Emulation

The United States National Academy of Engineering has already classified brain emulation as one of the Grand Engineering Challenges. In-silico brain emulation is a relevant research field for several reasons:

- The immediate benefit of brain emulation is a greater understanding of brain behavior through simulations based on biologically plausible models. Depending on the complexity of the model, it can provide insight ranging from single-cell behavior to the network dynamics of whole brain regions, without the need for in-vivo experiments. This can greatly accelerate brain experimentation and the understanding of the underlying biological mechanisms.

- One important eventual goal of the field is brain rescue. If brain function can be emulated in-silico accurately enough and in real time, it can lead to brain prosthetics and implants that can recover brain functionality lost due to diseases or accidents.

The general goal of the BrainFrame research theme is to apply high-performance, innovative solutions that enable large-scale, accurate or real-time brain simulations. A further goal is to leverage powerful experimental setups and data analysis to amplify brain research. To this end, our activities employ a multitude of HPC and other acceleration technologies such as FPGAs, Dataflow Computing Engines, GPUs and many-core processors and, most recently, Cloud computing.

The BrainFrame Framework

Depending on the desired model characteristics, we identify two general types of simulations that are relevant in neuroscientific experiments. The first type (TYPE-I) concerns highly accurate models (biophysically accurate, and even accurate to the molecular level) of smaller-sized networks (more than 100 and fewer than 1000 neurons) that require real-time or close to real-time performance. The second type (TYPE-II, over 1000 neurons) involves the simulation of large- or very large-scale networks in which accuracy can often be relaxed. These experiments attempt to simulate network sizes and connection densities closely resembling their biological counterparts. This, in combination with the variety of models commonly used, makes for a class of applications that vary greatly in terms of workload while also, depending on the case, requiring high throughput, low latency or both. A single type of HPC fabric, whether software- or hardware-based, cannot cover all possible use cases with optimal efficiency.
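As a minimal sketch, the size-based distinction between the two simulation types can be written as a small helper. The names `SimulationType` and `classify_simulation` are hypothetical and not part of BrainFrame, and the text does not say how networks of 100 neurons or fewer, or of exactly 1000 neurons, are treated, so the sketch leaves those cases unclassified rather than guessing.

```python
from enum import Enum


class SimulationType(Enum):
    """Hypothetical labels for the two simulation types described above."""
    TYPE_I = "TYPE-I"              # highly accurate, (near) real-time, smaller networks
    TYPE_II = "TYPE-II"            # large-scale networks, accuracy can be relaxed
    UNCLASSIFIED = "unclassified"  # sizes the text does not assign to either type


def classify_simulation(num_neurons: int) -> SimulationType:
    """Classify an experiment by network size only.

    Thresholds follow the text: TYPE-I for more than 100 and fewer than
    1000 neurons, TYPE-II for more than 1000 neurons. Treating all other
    sizes as unclassified is an assumption of this sketch.
    """
    if num_neurons <= 0:
        raise ValueError("num_neurons must be a positive integer")
    if 100 < num_neurons < 1000:
        return SimulationType.TYPE_I
    if num_neurons > 1000:
        return SimulationType.TYPE_II
    return SimulationType.UNCLASSIFIED
```

In practice the type also depends on the required model accuracy and latency, which this size-only helper does not capture.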

A better approach is to provide scientists with an acceleration platform that can adjust to this variety of workload characteristics. A heterogeneous system that integrates multiple HPC technologies, instead of just one, would be able to provide this. In addition, a framework that exposes all integrated technologies through a single, popular user interface makes it possible to select a different accelerator depending on availability, cost and desired performance. Such a hardware back-end must overcome additional challenges to be used in the field. It requires a front-end that provides two crucial features:

- An easy and commonly used interface through which neuroscientists can employ the platform, without the constant mediation of an engineer.

- The ability to reuse the vast number of models already available to the community.

The most recent advance in our work has been building from scratch and releasing a unique Cloud-HPC platform, BrainFrame Service (2.0). The platform resides on the Amazon Cloud and supports the elastic deployment of a number of such heterogeneous accelerators, each best suited (in terms of performance and instance cost) for a different subset of neuronal-model simulations. This back-end is combined with a NeuroML front-end to implement the BrainFrame tool-flow. NeuroML already offers a common interface to popular simulation platforms such as NEURON and NEST, as well as to newly developing ones that show great future potential. An overview of the Cloud architecture is shown further below.
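The idea of choosing an accelerator by availability, cost and performance can be illustrated with a minimal sketch. Everything in it is hypothetical: `AcceleratorOption`, `select_accelerator` and their fields are illustrative names, not the BrainFrame Service API, and the runtime estimates are assumed to be supplied by the caller for the specific model being simulated.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class AcceleratorOption:
    """Hypothetical description of one accelerator instance type."""
    name: str
    available: bool
    cost_per_hour: float      # instance price, arbitrary currency units
    est_runtime_hours: float  # caller-supplied estimate for the model at hand

    @property
    def est_total_cost(self) -> float:
        return self.cost_per_hour * self.est_runtime_hours


def select_accelerator(
    options: Iterable[AcceleratorOption],
    objective: str = "cost",
    max_runtime_hours: Optional[float] = None,
) -> Optional[AcceleratorOption]:
    """Pick an available accelerator, minimizing total cost or runtime.

    Returns None when no available option meets the runtime limit.
    """
    if objective not in ("cost", "performance"):
        raise ValueError("objective must be 'cost' or 'performance'")

    candidates = [
        o for o in options
        if o.available
        and (max_runtime_hours is None or o.est_runtime_hours <= max_runtime_hours)
    ]
    if not candidates:
        return None

    if objective == "cost":
        # Cheapest run first; break ties by shorter runtime.
        return min(candidates, key=lambda o: (o.est_total_cost, o.est_runtime_hours))
    # Fastest run first; break ties by lower total cost.
    return min(candidates, key=lambda o: (o.est_runtime_hours, o.est_total_cost))
```

Because the best accelerator differs per model and parameter setting, a selector of this kind would need per-model runtime estimates rather than a fixed ranking of the hardware.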

The topics currently worked upon within the BrainFrame research theme are:

- Acceleration of large-scale brain simulations: Focused mainly on cerebellar models, exploring the use of various HPC node technologies such as FPGAs, GPUs, dataflow engines and many-core processors (Xeon Phis) for delivering highly scalable, high-speed simulation platforms.

- Powerful, neuroscience-friendly modeling/simulation Cloud service: Exploration and development of a Cloud service front-end (NeuroML, other simulation tools), middleware and back-end (various heterogeneous accelerators, new neural solvers etc.).

- Novel neural solvers: Exploration of solvers best-suited for robust and rapid brain simulation, parallel solvers, novel neurosimulators etc.

- Brain rescue and brain-machine interfaces: Focused mainly on cerebellar models, attempting brain rescue by closing the loop between faulty brain regions and biologically plausible, computational brain models. This effort is rapidly being enhanced due to the world-leading, functional-ultrasound (fUS)-based technology the lab possesses for imaging the brain, via the CUBE theme.

- Employing current technologies to serve the neuroscientific effort: Research and development of innovative solutions for brain experiments and advanced analysis of online or offline scientific data.

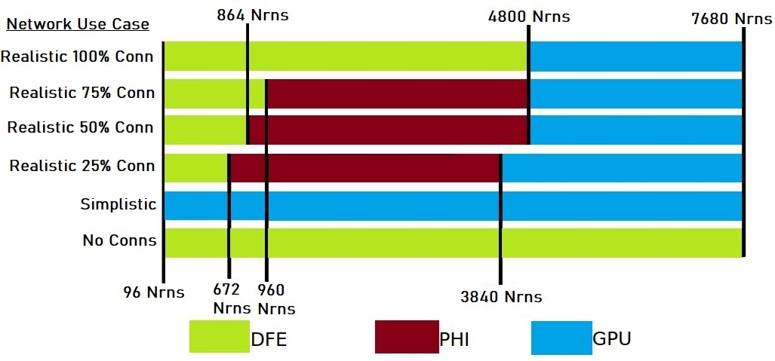

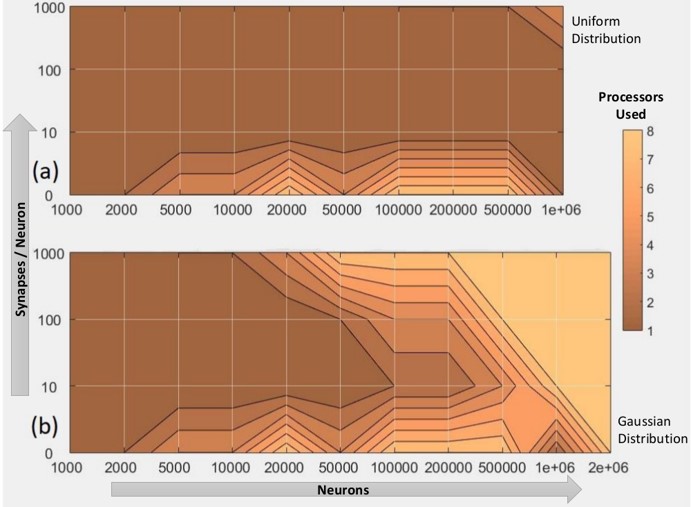

The heterogeneous accelerators provide distinctly different performance results, as a function of the model being simulated as well as the model runtime parameters and the number and type of accelerator(s) used:

Exploration of single Intel Xeon Phi, Nvidia GPU and Maxeler DFE nodes over diverse model parameter settings

Intel Xeon Phi (KNL) single- to eight-node exploration

References

For BrainFrame-related publications, please visit our Publications page.