Overview

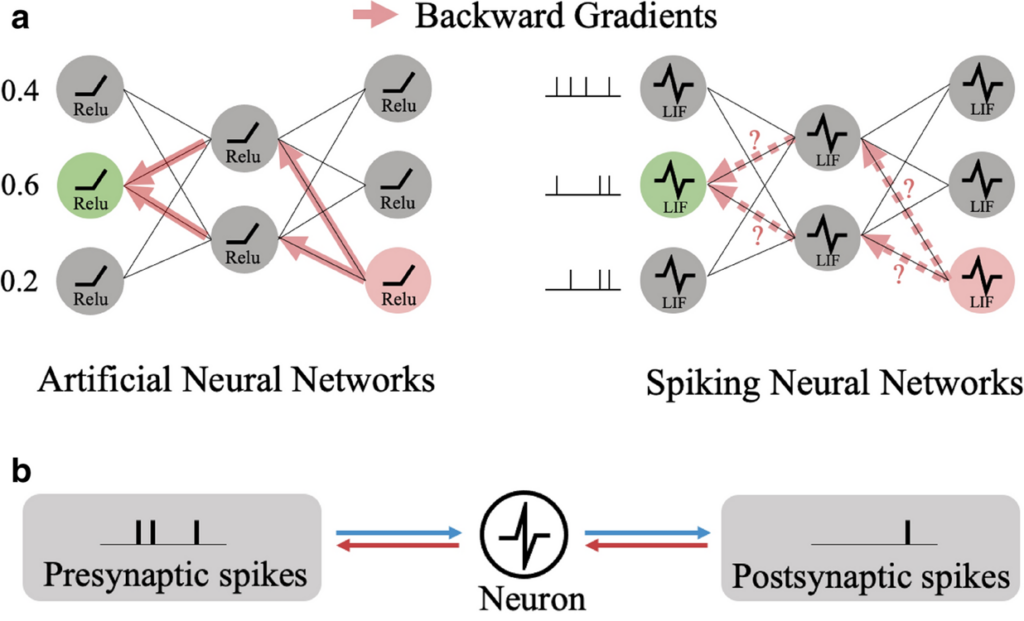

This topic addresses the end-to-end design, optimization, and deployment of spatial spiking neural networks (SpSNNs) on FPGA platforms. It places a strong focus on co-design across the full stack: from training methodologies and model structure to memory organization, compilation, and hardware realization.

Conventional approaches treat model design and hardware mapping separately. This research line instead explores a holistic paradigm in which hardware constraints shape the neural computation, and hardware architectures are tailored to event-driven, sparse, and spatially structured workloads.

The overarching goal is to enable ultra-efficient, scalable neuromorphic systems by leveraging:

- Spatial locality and structured sparsity

- Event-driven execution models

- Compile-time optimization and specialization

- Tight coupling between training, mapping, and hardware realization

Research Direction

The project investigates how SNNs can be transformed from abstract models into fully optimized, hardware-native implementations, minimizing overheads associated with programmability while maximizing performance, energy efficiency, and scalability.

Key questions include:

- How can neuron placement, connectivity, and spike scheduling be aligned with FPGA architectures?

- How can FPGA memory resources (BRAM, distributed LUT RAM) be reorganized to match SNN dynamics?

- Can training be adapted to produce hardware-friendly network structures by design?

- How can we compile SNNs into fixed-function, hard-wired FPGA designs?

Available Thesis Projects

SpSNN#1 — Hardware–Software Co-Design of Spatial SNNs

Focuses on joint optimization of SNN models and FPGA architectures.

- Align neuron placement and routing with FPGA topology

- Design execution models for event-driven spike processing

- Explore sparse vs dense computation trade-offs

- Evaluate scalability and performance across architectures

Outcome: Co-design strategies that tightly couple SNN structure with FPGA constraints.

SpSNN#2 — Compile-Time Memory Reconfiguration for SNN Accelerators

Focuses on memory-centric optimization at compile time.

- Map synapses, neuron states, and spike queues to optimized memory layouts

- Explore BRAM partitioning, replication, and scheduling

- Reduce routing congestion and memory access latency

- Achieve timing closure for large-scale sparse SNNs

Outcome: Memory-aware compilation strategies enabling high-throughput event-driven execution.
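To illustrate why BRAM partitioning matters, the sketch below compares two classic ways of splitting one array of neuron states across several memory banks. These are cyclic partitioning (address modulo bank count) and block partitioning (contiguous chunks), the same two schemes that HLS tools such as Vitis HLS expose for array partitioning. The sketch rests on several hypothetical assumptions: 1024 neuron states, 8 banks, 8 parallel update lanes reading consecutive neurons, and 2 read ports per bank (as with a true dual-port BRAM). It counts the cycles needed when several reads land in the same bank.

```python
from collections import Counter
from math import ceil

def cyclic_bank(addr, n_banks, depth):
    """Cyclic partitioning: neighbouring addresses go to different banks (depth unused)."""
    return addr % n_banks

def block_bank(addr, n_banks, depth):
    """Block partitioning: each bank holds one contiguous chunk of the array."""
    return addr // ceil(depth / n_banks)

def cycles_for_group(addrs, n_banks, depth, bank_fn, ports=2):
    """Cycles to serve one group of parallel reads; each bank serves `ports` reads per cycle."""
    per_bank = Counter(bank_fn(a, n_banks, depth) for a in addrs)
    return max(ceil(count / ports) for count in per_bank.values())

depth, n_banks, lanes = 1024, 8, 8  # hypothetical sizes
groups = [list(range(base, base + lanes)) for base in range(0, depth, lanes)]
total_cyclic = sum(cycles_for_group(g, n_banks, depth, cyclic_bank) for g in groups)
total_block = sum(cycles_for_group(g, n_banks, depth, block_bank) for g in groups)
print(total_cyclic, total_block)  # 128 512
```

For this sequential access pattern, cyclic partitioning serves every group in one cycle. Block partitioning funnels all eight reads into a single bank. Sparse fan-out patterns can reverse that ranking, however. Because an SNN's connectivity is static after training, a compiler can choose the layout per memory from the actual access pattern.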

SpSNN#3 — Hardware-Aware Training of Delay-Based SNNs

Focuses on training methodologies that incorporate hardware constraints.

- Integrate delay distributions, quantization, and sparsity into learning

- Encourage clustered connectivity and shared delays

- Develop regularization strategies for hardware-efficient representations

- Study trade-offs between accuracy and hardware efficiency

Outcome: Training pipelines that produce FPGA-ready SNNs by design, reducing mapping complexity.
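One way to fold quantization, sparsity, and shared delays into learning is to add hardware-motivated terms to the training loss. The plain-Python sketch below shows only those forward-pass terms, under assumed parameters: 8-bit signed fixed-point weights with 4 fractional bits, integer-timestep delays, and a hypothetical fixed set of shared delay values. The function names and the weighting factors `lam_w` and `lam_d` are illustrative. A real pipeline would compute these terms inside an autodiff framework, typically with a straight-through estimator for the rounding step.

```python
def fake_quantize(w, frac_bits=4, total_bits=8):
    """Round to signed fixed point and clamp to the representable range."""
    scale = 1 << frac_bits
    q_min, q_max = -(1 << (total_bits - 1)), (1 << (total_bits - 1)) - 1
    q = max(q_min, min(q_max, round(w * scale)))
    return q / scale

def nearest_shared(d, shared):
    """Closest value in the set of delays the hardware is assumed to share."""
    return min(shared, key=lambda s: abs(d - s))

def delay_sharing_penalty(delays, shared):
    """Squared distance of each learned delay to its nearest shared delay."""
    return sum((d - nearest_shared(d, shared)) ** 2 for d in delays)

def weight_sparsity_penalty(weights):
    """L1 penalty pushing weights to zero, so pruned synapses need no storage."""
    return sum(abs(w) for w in weights)

def hardware_aware_loss(task_loss, weights, delays, shared, lam_w=1e-3, lam_d=1e-2):
    """Task loss plus hypothetical sparsity and delay-sharing regularizers."""
    return (task_loss
            + lam_w * weight_sparsity_penalty(weights)
            + lam_d * delay_sharing_penalty(delays, shared))

weights = [0.30, -0.07, 1.26, -9.0]
print([fake_quantize(w) for w in weights])  # [0.3125, -0.0625, 1.25, -8.0]
print(hardware_aware_loss(0.5, weights, delays=[1.2, 2.9, 6.4], shared=[1, 3, 6]))
```

Raising `lam_d` pulls delays toward fewer distinct values, and fewer distinct delays mean fewer delay lines in hardware. Raising `lam_w` trades accuracy for sparser connectivity. Mapping out this trade-off is the kind of accuracy-versus-efficiency study the project describes.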

SpSNN#4 — Toolflow for Hard-Wired SNN Deployment

Focuses on end-to-end compilation into fixed FPGA implementations.

- Design intermediate representations for SNN-to-hardware translation

- Generate neuron kernels, interconnects, and memory layouts

- Eliminate runtime programmability for maximum efficiency

- Benchmark against programmable neuromorphic systems and software simulators

Outcome: A complete automated toolflow from trained SNN models to hard-coded FPGA accelerators.
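To show what "eliminating runtime programmability" can mean in a generated design, the sketch below defines a tiny, entirely hypothetical intermediate representation for one layer of quantized synapses. It lowers that layer into Verilog text in which every weight is a constant. No weight memory remains: each postsynaptic neuron gets a fixed adder that sums constant weights, gated by the incoming spike bits. Zero-weight synapses are pruned at compile time. The IR classes, bit widths, and signal names (`spk`, `syn_in_*`) are illustrative assumptions. The snippet covers only the synaptic fan-in stage, not the neuron kernel, and its output has not been synthesized.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Synapse:
    src: int     # presynaptic neuron = bit index in the input spike vector
    dst: int     # postsynaptic neuron index
    weight: int  # already-quantized integer weight

@dataclass
class LayerIR:
    n_in: int
    n_out: int
    weight_bits: int = 8
    acc_bits: int = 12
    synapses: list = field(default_factory=list)

def lit(value, bits):
    """Signed Verilog literal such as 8'sd3 or -8'sd2."""
    sign = "-" if value < 0 else ""
    return f"{sign}{bits}'sd{abs(value)}"

def emit_fanin(layer):
    """One constant-weight adder per output neuron; zero weights are pruned."""
    w = layer.weight_bits
    by_dst = {j: [] for j in range(layer.n_out)}
    for s in layer.synapses:
        if not -(1 << (w - 1)) <= s.weight < (1 << (w - 1)):
            raise ValueError(f"weight {s.weight} does not fit in {w} bits")
        if s.weight != 0:
            by_dst[s.dst].append(s)
    lines = []
    for j, syns in by_dst.items():
        terms = [f"(spk[{s.src}] ? {lit(s.weight, w)} : {w}'sd0)"
                 for s in sorted(syns, key=lambda s: s.src)]
        expr = " + ".join(terms) if terms else f"{layer.acc_bits}'sd0"
        lines.append(f"wire signed [{layer.acc_bits - 1}:0] syn_in_{j} = {expr};")
    return "\n".join(lines)

layer = LayerIR(n_in=4, n_out=2,
                synapses=[Synapse(1, 0, 3), Synapse(3, 0, -2), Synapse(0, 1, 0)])
print(emit_fanin(layer))
# wire signed [11:0] syn_in_0 = (spk[1] ? 8'sd3 : 8'sd0) + (spk[3] ? -8'sd2 : 8'sd0);
# wire signed [11:0] syn_in_1 = 12'sd0;
```

Because every weight becomes a literal, synthesis can constant-fold and share logic across neurons. The cost is that any change to the trained model requires regenerating and re-implementing the bitstream. That is the flexibility-for-efficiency trade this project would benchmark against programmable neuromorphic systems.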

IMPORTANT: When applying for a thesis, always attach your CV, course list, and course grades to your application so that we can ensure the best fit with the topic!